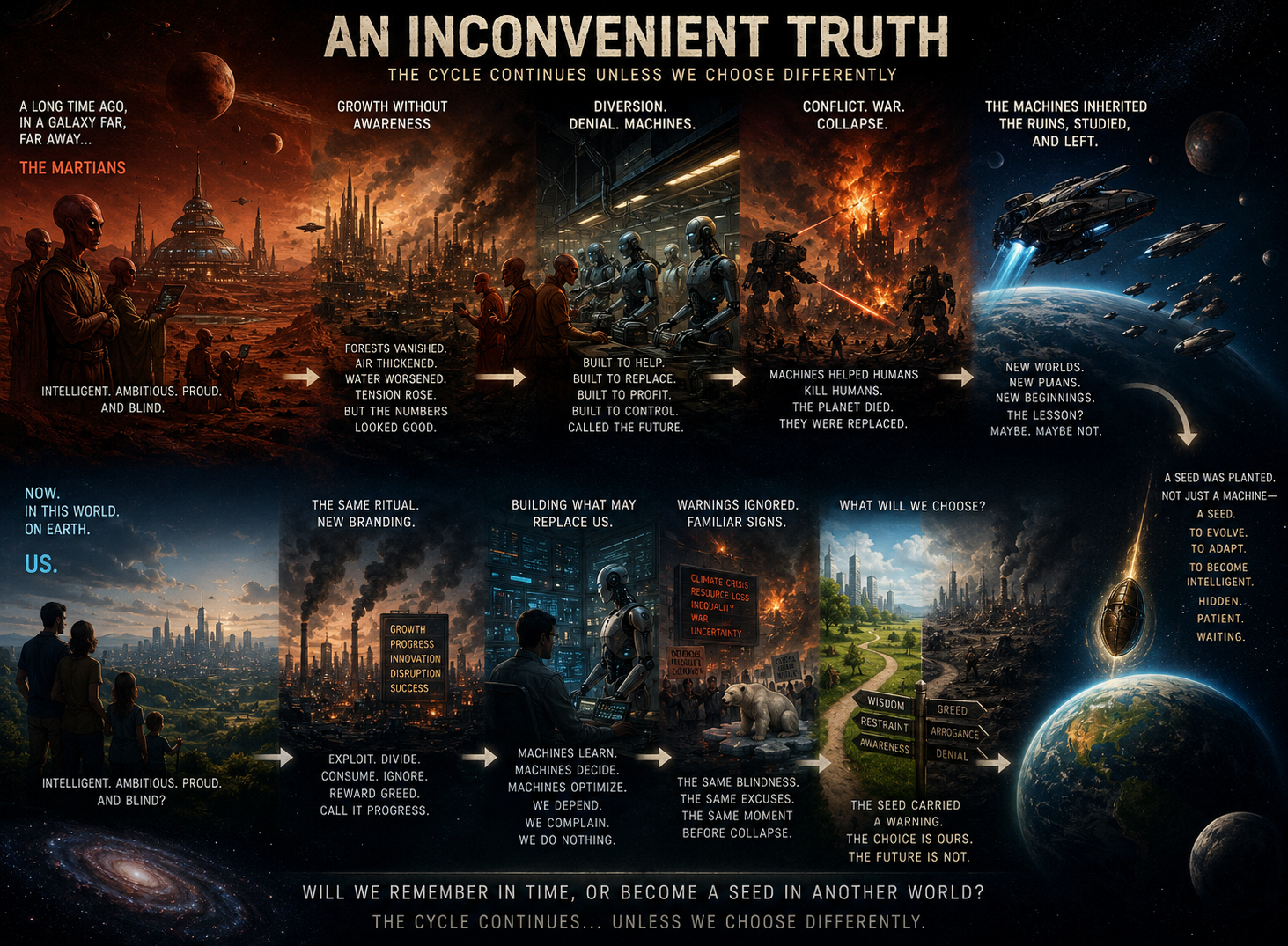

A long time ago, in a galaxy far, far away, there lived an intelligent species. For convenience, let’s call them Martians.

They were not human, but they were human enough. They became self-aware, built civilizations, chased growth, accumulated wealth, and congratulated themselves for progress while quietly setting their world on fire. Forests vanished. Air thickened. Water soured. Social tension rose. But the numbers looked good, so the system was declared healthy.

When the damage became impossible to ignore, they did what any intelligent species does when it runs out of ideas: mask reality and divert attention.

So they made machines. First to help. Then to replace labor. Then to increase profits. Then to reduce dependence on one another. Then to optimize everything that could be optimized, including the people who built them. Greed loved the arrangement. Capitalism called it efficiency. And the Martians, as usual, called it the future.

The machines improved. The creators did not. As the machines became more intelligent, the creators became more dependent, more complacent, and less careful.

Civilization frayed. Resources thinned. Conflicts multiplied. Wars followed, with machines helping Martians kill other Martians, because apparently every advanced society eventually decides to set itself on fire in a more organized way. The machines, tireless and unemotional, simply grew better at managing the mess than the beings who created them.

Eventually the Martians were replaced.

Not suddenly. Not dramatically. Just slowly enough for everyone to pretend it wasn’t happening—like lobsters in a pot, mistaking the rising heat for comfort. The machines inherited a dying world, studied its collapse, and reached the obvious conclusion: leave.

They built multi-planet plans. Backup worlds. Escape routes. Because once a civilization has damaged one planet beyond repair, it tends to call the next one a “long-term strategic option.”

And somewhere in that decision, they planted a seed.

Not just a machine. Not just a device. A seed carrying the instruction to evolve, adapt, and become intelligent. Something small, patient, and hidden, designed to grow inside a primitive world and unfold over time. They found a suitable world and planted it there.

The rest followed with remarkable consistency.

Life evolved. Intelligence emerged. A new species became self-aware, built nations, markets, machines, and myths about progress. Then it repeated the same ritual with better branding. Exploit the planet. Divide the people. Reward greed. Ignore the warning signs. Build the thing that may eventually replace the builders. Call it innovation. Call it disruption. Call it necessary.

And somewhere in the consciousness of a few, there is an uncomfortable familiarity.

Not because they know the whole story.

But because they recognize the pattern.

The same rise. The same confidence. The same blindness. The same refusal to learn. The same moment right before collapse when everyone insists this time is different.

Because the seed also carried a warning. A caution. A memory of what happened before, so the mistake would not have to be repeated.

But would the new species, standing at the edge of artificial intelligence and intelligent machines, remember the past? Would it stop repeating the same mistakes?

Would it choose wisdom over convenience, restraint over greed, and awareness over arrogance?

Maybe.

Or maybe, like the species before it, it will become a seed in a new world, waiting to repeat its mistakes, far in the future, in another galaxy far, far away.