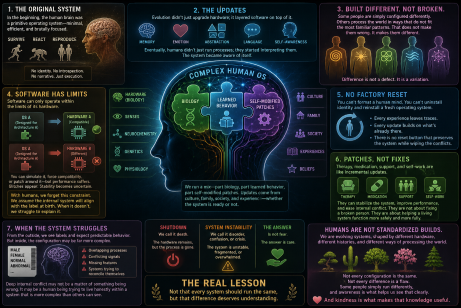

In the beginning, the human brain was a primitive operating system—minimal, efficient, and brutally focused. No identity, no introspection, no narrative. Just execution: survive, react, reproduce.

Then came the updates.

Evolution didn’t just upgrade hardware; it layered software on top of it—memory, emotion, abstraction, language. Eventually, humans didn’t just run processes; they started interpreting them. The system became aware of itself.

And that’s where things stopped being simple.

Because unlike machines, humans don’t run a single clean operating system. We run a complex mix—part biology, part learned behavior, part self-modified patches. Culture installs frameworks. Families install expectations. Society pushes updates whether the system is ready or not.

And here’s the tension: software can only operate within the limits of its hardware.

In computing, that’s obvious. You can’t install an operating system built for one architecture onto completely different hardware and expect it to function smoothly. You can emulate it, force compatibility, or patch around it, but performance suffers. Glitches appear. Stability becomes uncertain.

With humans, we often forget this constraint.

We label the hardware at the time of creation and assume the internal system will align with it. When it doesn’t—when identity, perception, or experience diverges—we struggle to explain it. Some are simply configured differently. Others process the world in ways that do not fit the most familiar patterns. That does not make them wrong. It makes them different.

And unlike machines, we don’t have a clean reset.

You can’t format a human mind. You can’t uninstall identity and reinstall a fresh operating system from scratch. There’s no factory reset button that preserves the system while wiping the conflicts. Every experience leaves traces. Every update builds on what’s already there.

What we do have are patches.

Therapy, support, and self-work can act like incremental updates. They can stabilize the system, improve performance, and ease internal conflict. Sometimes they work remarkably well. But they are not about fixing a broken person. They are about helping a living system function more safely and more fully within its own design.

And sometimes, the system still struggles.

From the outside, people see labels and expect predictable behavior. Like looking at a device and assuming you know exactly what software it runs. But inside, the configuration may be far more complex: overlapping processes, conflicting signals, missing features, or systems still trying to reconcile themselves.

So when someone experiences deep internal conflict, whether it takes the form of mental health struggles or questions of identity, it may not be a matter of something being wrong. It may simply be a human being trying to live honestly within a system that is more complex than others can see.

When the software shuts down completely, we call it death: the hardware remains, but the OS is shut down permanently. When the software becomes unstable, fragmented, or overwhelmed, we call it disorder, confusion, or crisis. But even then, the answer is not fear. The answer is care.

Maybe that is the real lesson—not that every system should run the same, but that difference deserves understanding.

Because humans are not standardized builds.

We are evolving systems, shaped by different hardware, different histories, and different ways of processing the world. Not every configuration is the same, and not every difference is a flaw. Some people simply run differently, and awareness is what helps us see that clearly.

And kindness is what makes that knowledge useful.